Same Prompt, Better Results: How One Founder Built a Self-Improving Sales Pipeline

Eight agents manage Deskimo's BD pipeline. The agents running today are meaningfully different from the ones Deskimo shipped: same prompt, same integrations, but three weeks of editorial judgment built into every draft by a learning loop powered by Hyperagent's memories.

Deskimo is growing its business development pipeline with agents. The share of agent-drafted outbound emails that need manual edits dropped from 100% to 10-20% in three weeks.

- Deskimo built an eight-agent BizDev pipeline on Hyperagent in one week. It handles prospecting, outreach, and reply management across ten countries in five languages.

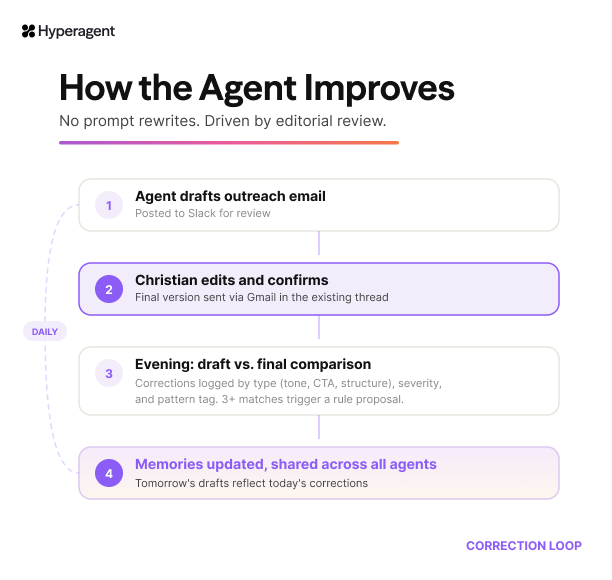

- The correction loop compares the agent's drafts to the versions the founder actually sends. The agent reads the gap and adjusts. No prompt rewrites.

- In three weeks, the founder went from editing 100% of outreach emails to approving 80-90% without changes.

Christian hasn't updated his agent's prompt in three weeks. The emails keep getting better anyway.

Every night, the agent reviews two sets of data: the outreach it drafted, and the versions Christian actually sent. It reads the gap between them, noticing where he softened a pitch or replaced a generic closer with a specific question. The agent adjusts, and the next set of drafts reflects what it learned.

Christian is a co-founder at Deskimo, a co-working space marketplace operating across ten countries. Before the agents, business development was a patchwork. BDMs, country managers, and co-founders all took turns. Language barriers meant founders stepped in for markets where local hires struggled. Outreach quality varied from person to person, market to market.

When he started using Hyperagent, he built eight agents that run the entire BD pipeline. It took about a week. The correction loop is what compounds.

The Core Ideas That Shaped Deskimo’s Agents

Christian aligned around two principles early.

- An agent doesn't need to be right on day one. It needs to get better every week without anyone rewriting the prompt. Christian edits the agent’s drafts the way he'd manage a junior hire. The correction signal comes from that normal review, not from prompt engineering.

- One agent's correction should improve every agent in the pipeline. When the QA agent discovered that .gov.tw domains bounced 71% of the time, the research agent stopped sourcing them. That lesson didn't require a meeting or a config change. It propagated through Hyperagent’s shared memory.
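The second principle can be pictured as one agent writing a lesson to a store that another agent reads before it acts. The sketch below is illustrative only: `SharedMemory`, `qa_record_bounces`, `research_filter`, and the 50% cutoff are hypothetical names and values, not Hyperagent's API, and the send and bounce counts are invented to match the 71% figure.

```python
from dataclasses import dataclass, field

# Hypothetical cutoff: block a domain suffix once half of its sends bounce.
BOUNCE_RATE_LIMIT = 0.5


@dataclass
class SharedMemory:
    """Stand-in for a memory store that every agent can read and write."""
    blocked_suffixes: set[str] = field(default_factory=set)


def qa_record_bounces(memory: SharedMemory, suffix: str, sent: int, bounced: int) -> None:
    """QA agent side: store a lesson when a suffix bounces too often."""
    if sent and bounced / sent >= BOUNCE_RATE_LIMIT:
        memory.blocked_suffixes.add(suffix.lower())


def research_filter(memory: SharedMemory, emails: list[str]) -> list[str]:
    """Research agent side: skip addresses that match a stored lesson."""
    return [
        email for email in emails
        if not any(email.lower().endswith(s) for s in memory.blocked_suffixes)
    ]


memory = SharedMemory()
# Illustrative counts only: 71 bounces out of 100 sends.
qa_record_bounces(memory, ".gov.tw", sent=100, bounced=71)
candidates = ["info@space.gov.tw", "hello@cowork.de"]
sourced = research_filter(memory, candidates)
```

The point of the design is that neither agent calls the other. The research agent only consults the store, so a lesson recorded once applies to every later run.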

The Predictable Parts Are Easy. The Unexpected Needs an Agent.

Deskimo is a two-sided marketplace. Supply is finite: there are only so many co-working spaces in any city, and the bottleneck has always been the same. Find spaces in new markets, identify the person who makes listing decisions, and convince them to join.

The predictable parts of outreach are easy to automate. A new space appears on a list, send an intro. No reply after five days, send a follow-up. Any workflow automation tool handles this.

The unpredictable parts are where it breaks. A workspace manager in Hamburg replies asking about revenue share. Another says they're interested but not until renovations finish in September. A third copies a colleague who handles partnerships. Each reply requires a different response. Rule-based automations would treat them all the same.

Christian needed something that could read a reply, understand the context, and decide what to say next. And he needed it to get better at those decisions over time, without him rebuilding the workflow every week.

Building an Agent that Learns Your Business

Before the agent could start pitching, it needed to know the business. So Christian connected Hyperagent to the tools his business runs on for shared context.

For outreach, the agent knows Deskimo's partnership pitch lives in Google Drive: its offerings, how the revenue model works, and what onboarding looks like. When it writes to a space in Germany, it selects the German-language presentation. The UK gets a different version.

The localization goes deeper than translation: each market has different playbooks with different selling points, objections, and conversational norms. A pushback from a municipal Gründungszentrum in eastern Germany requires a different response than a soft decline from a commercial operator in Auckland.

Corrections an agent can study

Before deploying his agents, Christian set up the most crucial piece: the feedback loop. Every evening, a scheduled job pulls the day's approval threads and compares each draft with the version that was finally sent.

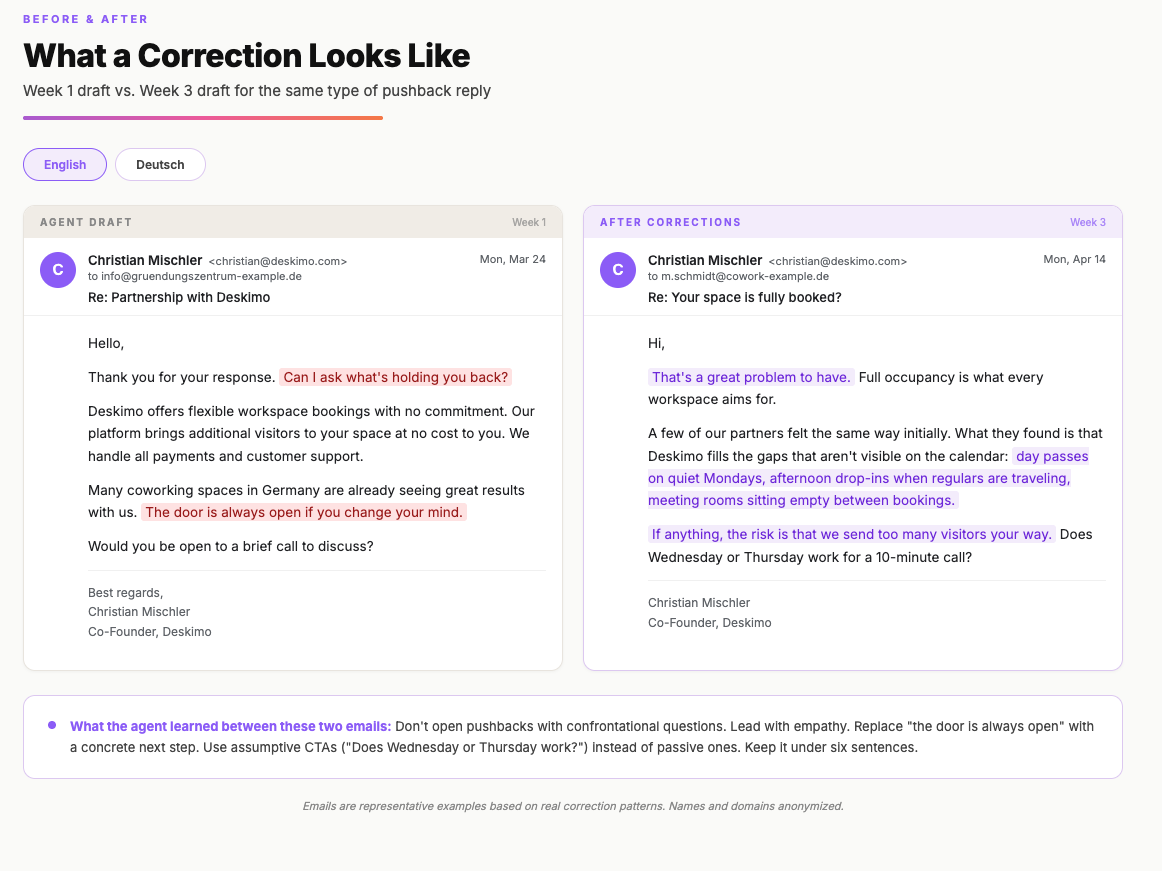
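The draft-versus-final comparison can be sketched with Python's standard `difflib`. This is a minimal illustration and not Deskimo's implementation: the function names and the line-by-line granularity are assumptions, and the example emails are invented.

```python
import difflib


def changed_spans(draft: str, sent: str) -> list[tuple[str, str, str]]:
    """Return (operation, draft_text, sent_text) for every span that differs."""
    draft_lines = draft.splitlines()
    sent_lines = sent.splitlines()
    matcher = difflib.SequenceMatcher(a=draft_lines, b=sent_lines, autojunk=False)
    changes = []
    for op, a0, a1, b0, b1 in matcher.get_opcodes():
        if op == "equal":
            continue  # identical lines carry no correction signal
        changes.append(
            (op, "\n".join(draft_lines[a0:a1]), "\n".join(sent_lines[b0:b1]))
        )
    return changes


def review_draft(draft: str, sent: str) -> dict:
    """Summarize one approval thread: was the draft edited, and where?"""
    changes = changed_spans(draft, sent)
    return {"approved_unchanged": not changes, "changes": changes}


# Invented example: the founder replaces one line of the draft.
draft = "Hi Anna,\nCan I ask what's holding you back?\nBest,\nDeskimo"
sent = "Hi Anna,\nThanks for the update, that makes sense.\nBest,\nDeskimo"
result = review_draft(draft, sent)
```

A diff like this only locates the edit. Deciding what the edit means, for example "opener was too confrontational", is the judgment step the agent performs on top of it.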

Of 12 draft approvals reviewed in the first backfill, 10 were sent without changes. The two that were edited both taught the system something specific: don't open pushbacks with confrontational questions, and don't close with passive "the door is always open" language. Instead, offer a concrete next step.

The agent tracks corrections in a structured log, categorizing each one by type, severity, and a reusable pattern tag. When the same pattern appears three or more times, the agent proposes a rule change. Small formatting fixes get applied automatically. Tone and messaging changes get posted to Slack for approval.
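The log-and-threshold logic described above might look like the following sketch. The field names mirror the description (type, severity, pattern tag), but the class, the constant names, the example tags, and the routing labels are hypothetical. Treating every "formatting" correction as safe to auto-apply is also an assumption.

```python
from collections import Counter
from dataclasses import dataclass

RULE_THRESHOLD = 3                 # same pattern seen three or more times
AUTO_APPLY_KINDS = {"formatting"}  # assumption: only formatting fixes skip approval


@dataclass(frozen=True)
class Correction:
    kind: str      # e.g. "formatting", "tone", "messaging"
    severity: str  # e.g. "low", "medium", "high"
    pattern: str   # reusable pattern tag


def propose_rules(log: list[Correction]) -> list[dict]:
    """Turn repeated corrections into rule proposals with a routing decision."""
    counts = Counter((c.pattern, c.kind) for c in log)
    proposals = []
    for (pattern, kind), seen in counts.items():
        if seen < RULE_THRESHOLD:
            continue  # not enough evidence yet
        action = "auto_apply" if kind in AUTO_APPLY_KINDS else "post_to_slack"
        proposals.append(
            {"pattern": pattern, "kind": kind, "count": seen, "action": action}
        )
    return proposals


# Invented log entries for illustration.
log = (
    [Correction("tone", "high", "confrontational-opener")] * 3
    + [Correction("formatting", "low", "reply-inline")] * 4
    + [Correction("messaging", "medium", "passive-closer")] * 2
)
proposals = propose_rules(log)
```

Keeping the threshold and the auto-apply set as named constants makes the approval policy easy to inspect and change without touching the counting logic.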

Early on, the agent restarted email threads instead of replying inline, and once dropped a cc'd colleague from a reply. The feedback loop caught both within days.

The outreach agent also runs structured A/B tests. Four variants per language, each running until it crosses 30 sends. Weekly, the agent drops the lowest-performing variant, takes the best one, and tweaks a single variable: a subject line, an opening sentence, a CTA. Then the cycle repeats.
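One weekly rotation of that test cycle could be sketched as follows. The source does not say which metric defines "lowest-performing", so reply rate is an assumption here, as are the names `Variant`, `weekly_rotation`, and `mutate`, and the choice to treat 30 sends as the minimum sample.

```python
from dataclasses import dataclass
from typing import Callable

MIN_SENDS = 30  # each variant runs until it reaches this many sends


@dataclass
class Variant:
    name: str
    sends: int = 0
    replies: int = 0

    @property
    def reply_rate(self) -> float:
        # Assumed performance metric: replies per send.
        return self.replies / self.sends if self.sends else 0.0


def weekly_rotation(
    variants: list[Variant], mutate: Callable[[Variant], Variant]
) -> list[Variant]:
    """Drop the weakest variant and add a one-variable tweak of the best."""
    if any(v.sends < MIN_SENDS for v in variants):
        return variants  # wait until every variant has enough data
    ranked = sorted(variants, key=lambda v: v.reply_rate, reverse=True)
    best, worst = ranked[0], ranked[-1]
    challenger = mutate(best)  # e.g. new subject line, opener, or CTA
    return [v for v in variants if v is not worst] + [challenger]


# Invented numbers for illustration.
current = [
    Variant("feature-led", sends=30, replies=2),
    Variant("revenue-focused", sends=31, replies=4),
    Variant("social-proof", sends=30, replies=3),
    Variant("loss-aversion", sends=32, replies=6),
]
next_week = weekly_rotation(
    current, mutate=lambda v: Variant(name=v.name + "+new-subject")
)
```

Changing a single variable per cycle keeps each comparison interpretable: if the challenger wins, the tweak is the likely reason.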

Early on, the agent drafted a pushback reply to a municipal co-working center that had declined. The draft opened with: "Can I ask what's holding you back?"

Christian rewrote the entire email. His version opened with empathy, added reassurance, a playful confidence line about "sending too many visitors," and a softer call to action. The confrontational question was gone.

A week later, when a different workspace said they were fully booked, the agent's draft led with understanding rather than interrogation. It had learned the pattern. Not because someone rewrote the prompt, but because the correction was logged, categorized, and absorbed.

Memory that persists

After three weeks of corrections, the agent carries accumulated knowledge about what good outreach looks like for each market.

- Loss aversion works better than gain framing.

- Emails longer than six sentences get ignored.

- Assumptive CTAs ("Does Wednesday or Thursday work?") outperform passive ones ("Would you be interested in a chat?").

- Faster follow-up cadences signal genuine interest.

- Always acknowledge declines before archiving.

- When contacting a second location of a brand, reference the prior outreach.

That knowledge persists across sessions. Monday's agent remembers what Sunday's review taught it, which remembers what the week before taught it.

Eight Agents, One Pipeline

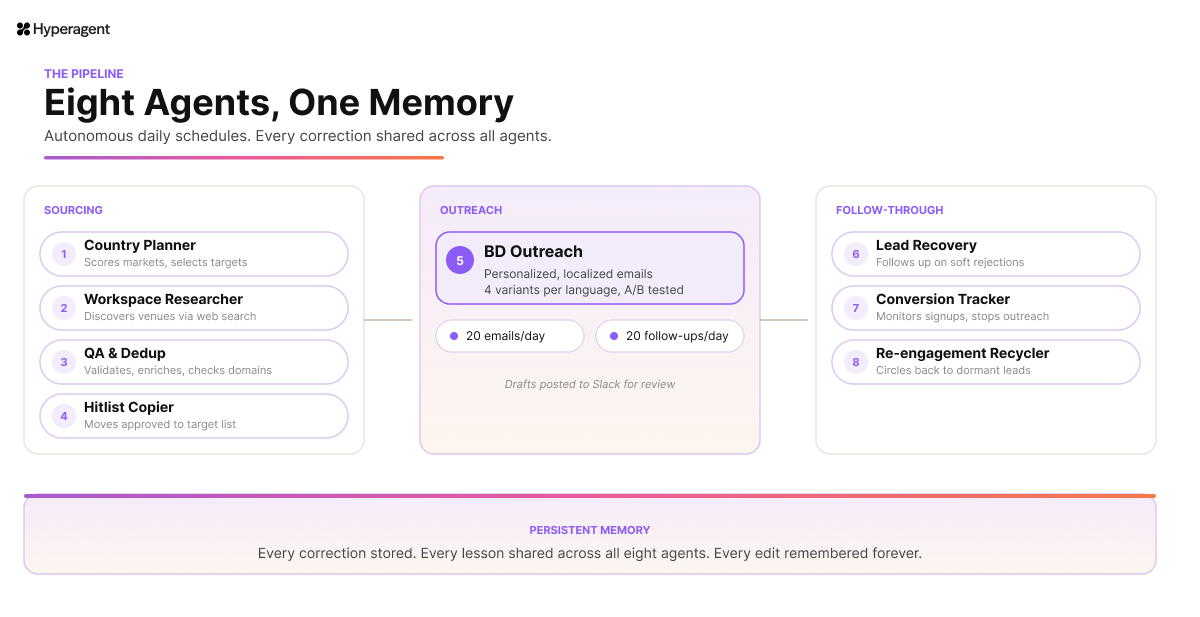

The outreach agent doesn't work alone. It sits in the middle of an eight-agent pipeline that handles everything from market selection to partner onboarding, running autonomously on daily schedules.

Sourcing

The Country Planner agent scores markets by coverage gaps and growth potential, then passes target countries to a Workspace Researcher agent that discovers new venues through web search and browser automation. A Quality Assurance agent validates each venue, checks eligibility, enriches missing data, and runs MX (mail exchanger) record lookups to catch dead email domains before they waste an outreach slot.
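An MX check of this kind can be sketched in a few lines. The example assumes the third-party `dnspython` package for the real lookup and lets callers inject a different lookup function. `split_by_mx`, the venue dictionary shape, and the example data are hypothetical and are not taken from Deskimo's pipeline.

```python
from typing import Callable

MxLookup = Callable[[str], list[str]]


def dnspython_mx_lookup(domain: str) -> list[str]:
    """Return MX hosts for a domain, or [] if none can be resolved.

    Assumes the third-party dnspython package is installed.
    """
    import dns.exception
    import dns.resolver

    try:
        answers = dns.resolver.resolve(domain, "MX")
    except (
        dns.resolver.NXDOMAIN,
        dns.resolver.NoAnswer,
        dns.resolver.NoNameservers,
        dns.exception.Timeout,
    ):
        return []
    hosts = [str(record.exchange).rstrip(".") for record in answers]
    return [h for h in hosts if h]  # a bare "." target means "accepts no mail"


def split_by_mx(venues: list[dict], lookup: MxLookup = dnspython_mx_lookup):
    """Separate venues whose email domain has an MX record from the rest."""
    deliverable, rejected = [], []
    for venue in venues:
        _, at_sign, domain = venue.get("email", "").rpartition("@")
        if at_sign and domain and lookup(domain.lower()):
            deliverable.append(venue)
        else:
            rejected.append(venue)
    return deliverable, rejected


# Offline illustration with a fake lookup table instead of real DNS.
fake_records = {"cowork.de": ["mx1.cowork.de"]}
venues = [
    {"name": "A", "email": "hello@cowork.de"},
    {"name": "B", "email": "info@dead.example"},
    {"name": "C", "email": "not-an-email"},
]
ok, bad = split_by_mx(venues, lookup=lambda d: fake_records.get(d, []))
```

An MX record only shows that a domain is set up to receive mail. It does not prove that a specific mailbox exists, so a check like this reduces bounces rather than eliminating them.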

Outreach

Approved venues flow into the target list. The Outreach Agent sends personalized, localized emails with the correct partnership presentation attached. Four template variants per language test different approaches: feature-led, revenue-focused, social-proof, and ultra-short loss-aversion.

Follow-through

A Lead Recovery agent handles soft rejections with relevant follow-up questions. A Conversion Tracker agent monitors Deskimo's partner portal for completed signups and stops all outreach to converted spaces. And lastly, a Re-engagement Recycler agent circles back to dormant leads after a cooling period.

The layer underneath all eight

None of these agents operates in isolation. Every correction, every learned pattern, and every rule that emerges from the nightly feedback loop is stored in Hyperagent's persistent memory and shared across the entire pipeline.

When the outreach agent learns that confrontational openers kill replies, the recovery agent learns it too. The Country Planner's market scores reflect conversion data from the outreach agent's A/B tests.

This is the part that makes the pipeline more than eight automations running in parallel. Each agent gets smarter because every other agent got smarter first. And the person training them doesn't configure eight separate systems. They edit emails over coffee, and the corrections propagate.

Every edit Christian makes is a lesson that eight agents learn once and never forget.

What Happens When Memory Compounds

Christian built the full pipeline in about a week. The correction loop has been running since. The agent he has today is meaningfully different from the one he started with. Same prompt, same integrations, same scheduled runs, but three weeks of his editorial judgment built into every draft.

Most businesses that automate outreach are optimizing for volume. Send more, faster. The correction loop optimizes for something different: judgment. The agent doesn't just send more emails. It sends better emails, and gets better at sending them, and the person training it doesn't have to learn a single thing about prompt engineering to make that happen.

Build your pipeline with agents built on Hyperagent →